Evolution

For most of human history, what you could learn was entirely determined by where you were born and who happened to be standing near you.

Knowledge lived inside people. The only technology available to move it was time and proximity.

A master glassblower in 14th-century Venice didn't write a manual. He let you watch. For years. And when he decided you were ready, he let you try.

The apprenticeship model wasn't slow because people were lazy or systems were corrupt. It was the only available mechanism for knowledge transfer.

The ancient and medieval worlds had their exceptions: Plato's Academy in Athens, the great Islamic madrasas, the imperial examination systems of China, the Library of Alexandria. But these served tiny fractions of their populations.

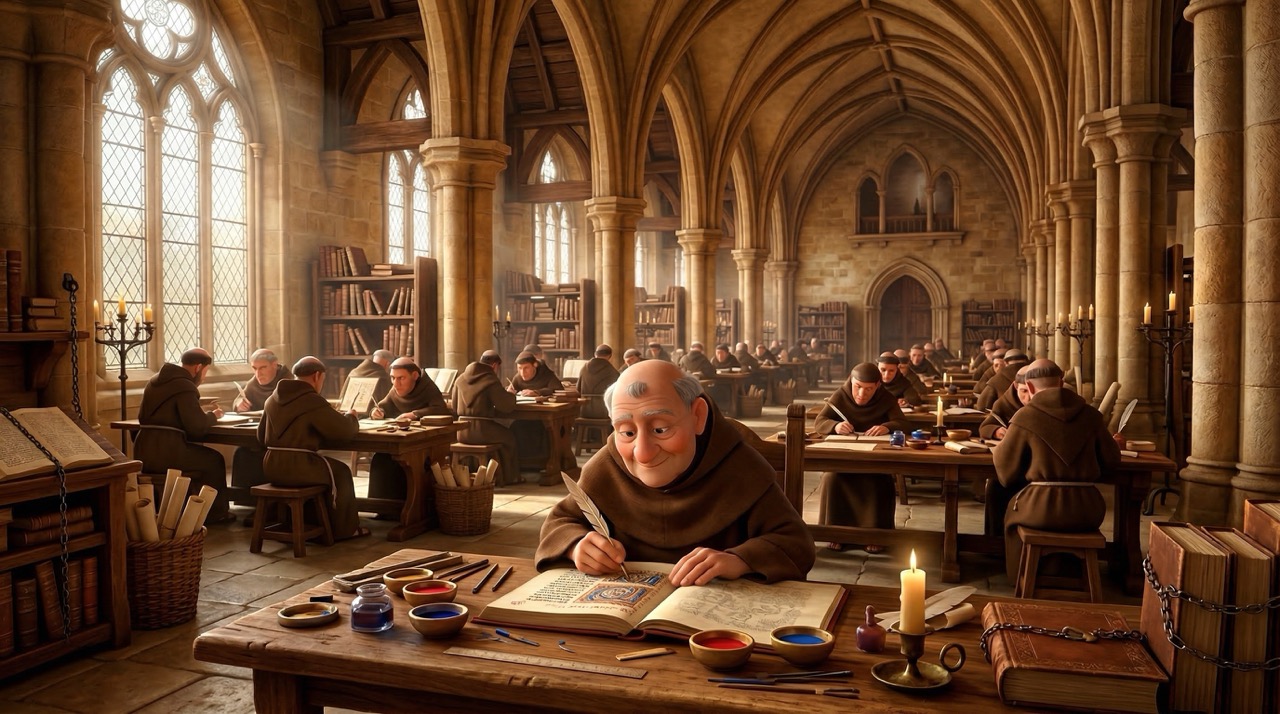

Medieval Europe adds a huge piece we haven't named yet: the monastery.

For centuries, monasteries and convents were where fragile knowledge lived on the page: scriptoria where texts were copied by hand, commented on, and guarded.

Religion didn't only fund learning. It decided what counted as truth worth preserving and who was fit to read it. Cathedral and monastic schools widened the circle slightly for some boys of means, but the design was still exclusionary: access depended on spiritual vocation, patronage, or birth.

The Middle Ages were slow not because nothing was happening, but because knowledge was chained to institutions, geography, and sacred order. That pattern matters when you later watch industrial trainers try to break the same dependencies with media, standards, and scale.

Literacy itself was a privilege. Formal education was a class marker, not a human right.

The first formalized job instruction methods appeared in the late 1800s: crude, inconsistent, and entirely without scientific foundation.

The single most important event in the history of Instructional Design is World War II.

The United States alone needed to train over 12 million military personnel, plus millions more factory workers, in a timeframe that made traditional education completely irrelevant.

For the first time in history, learning was treated as an engineering problem.

What emerged from this period was foundational:

Job Task Analysis: the practice of systematically breaking complex jobs into discrete, observable, teachable steps.

Before you can teach something, you have to map it.

Criterion-Referenced Testing: measuring whether someone can perform to a fixed standard, not where they land on a curve next to peers.

Once you’ve broken a job into teachable steps, you still have to decide what “good enough” means.

Wartime scale pushed aside the old habit of ranking people. The military needed a binary that administrators could trust: can this person perform the required task, yes or no?

| Test Type | What are we comparing you to? | What does the score look like? | Best used for... |
| --- | --- | --- | --- |
| Norm-Referenced | Other test-takers | Percentile rank (e.g., 85th percentile) | Selecting the top candidates for a limited spot. |
| Criterion-Referenced | A specific standard or cut-off | Pass/Fail or Mastery/Non-Mastery | Checking if a vital skill is safe and ready to use. |
| Domain-Referenced | A strictly defined universe of knowledge | Percentage of the domain mastered (e.g., 90% of the topic) | Diagnosing exact strengths and pinpointing the next steps for learning. |

Norm, criterion, and domain framing answer different questions. Wartime throughput leaned hardest on criterion.
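To make the difference concrete, here is a minimal Python sketch that scores the same learner three ways. Everything in it is an illustrative assumption: the function names, the peer scores, the cut score of 85, and the 50-item domain are invented for the example. Percentile rank is also defined in more than one way in practice; this version counts only peers who scored strictly lower.

```python
from bisect import bisect_left


def percentile_rank(score, peer_scores):
    """Norm-referenced: percentage of peers who scored strictly below `score`."""
    ordered = sorted(peer_scores)
    below = bisect_left(ordered, score)  # count of scores strictly less than `score`
    return 100.0 * below / len(ordered)


def meets_criterion(score, cut_score):
    """Criterion-referenced: pass/fail against a fixed standard, peers ignored."""
    return score >= cut_score


def domain_mastery(items_correct, items_in_domain):
    """Domain-referenced: percentage of a defined item domain answered correctly."""
    return 100.0 * items_correct / items_in_domain


# Hypothetical data: one learner, ten peers, a fixed cut score, a 50-item domain.
peers = [55, 60, 62, 70, 71, 75, 80, 82, 90, 95]
learner_score = 82

print(percentile_rank(learner_score, peers))          # 70.0
print(meets_criterion(learner_score, cut_score=85))   # False
print(domain_mastery(items_correct=41, items_in_domain=50))  # 82.0
```

The same learner looks strong next to peers, fails the fixed standard, and has covered 82% of the domain. Which number matters depends on the decision being made, which is why the wartime trainers favored the fixed standard.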

The U.S. military produced over 400 training films during the war, one of the first large-scale uses of media for training.

The "master" didn't have to be physically present. The master could be captured, reproduced, and distributed.

The psychologists involved in this work learned more about applied learning science in five years than the previous century had produced.

When the urgent war work wound down, the pressure didn’t. Factories, schools, and corporations still had to move millions of people to new competence, fast.

The field never collapsed into one grand theory. Instead it fanned outward into a family of movable ideas you still borrow, blend, or even pit against each other on purpose when the context demands it.

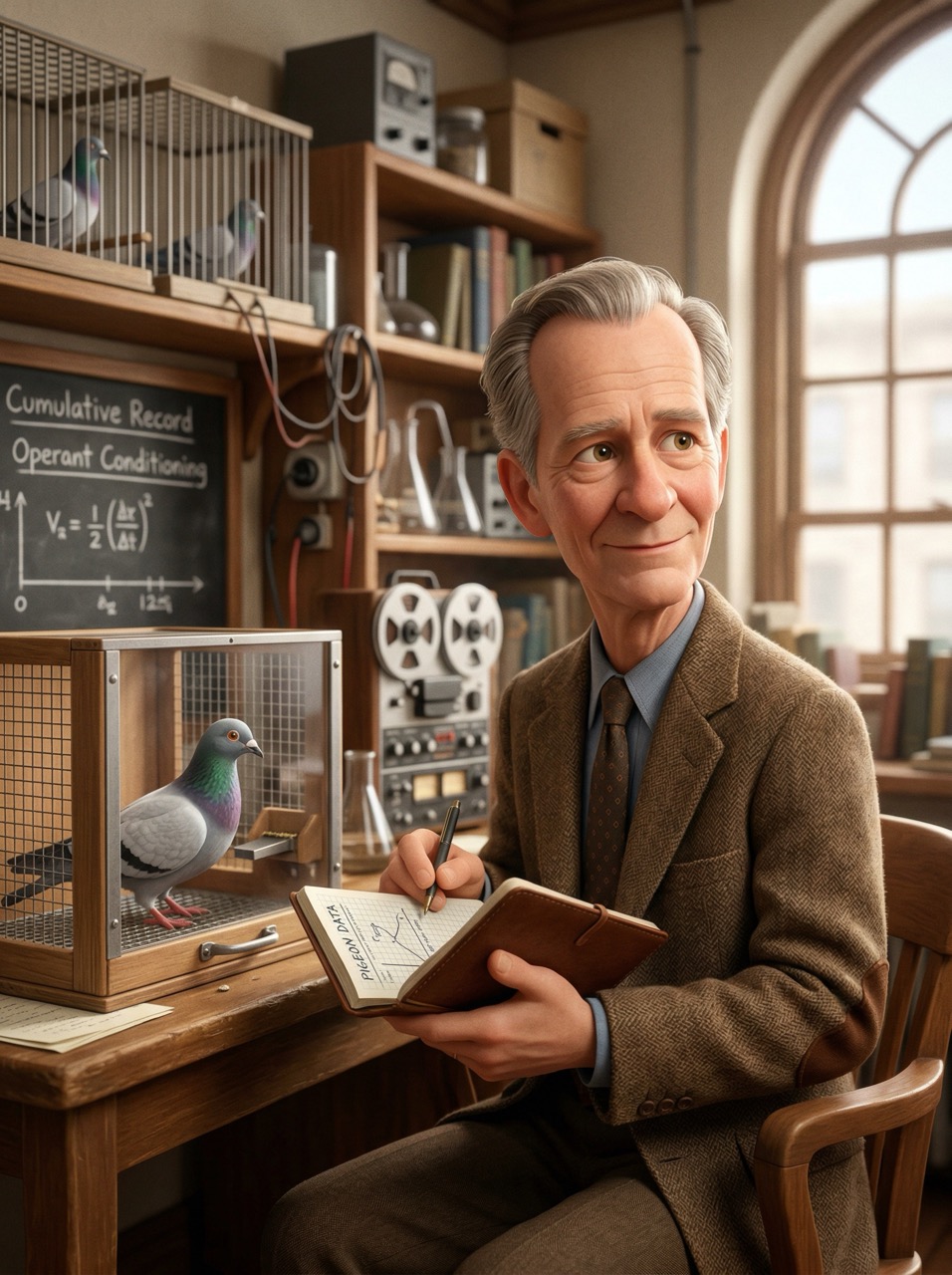

B.F. Skinner — made reinforcement, feedback, and repeated practice visible enough to engineer reliable behavior.

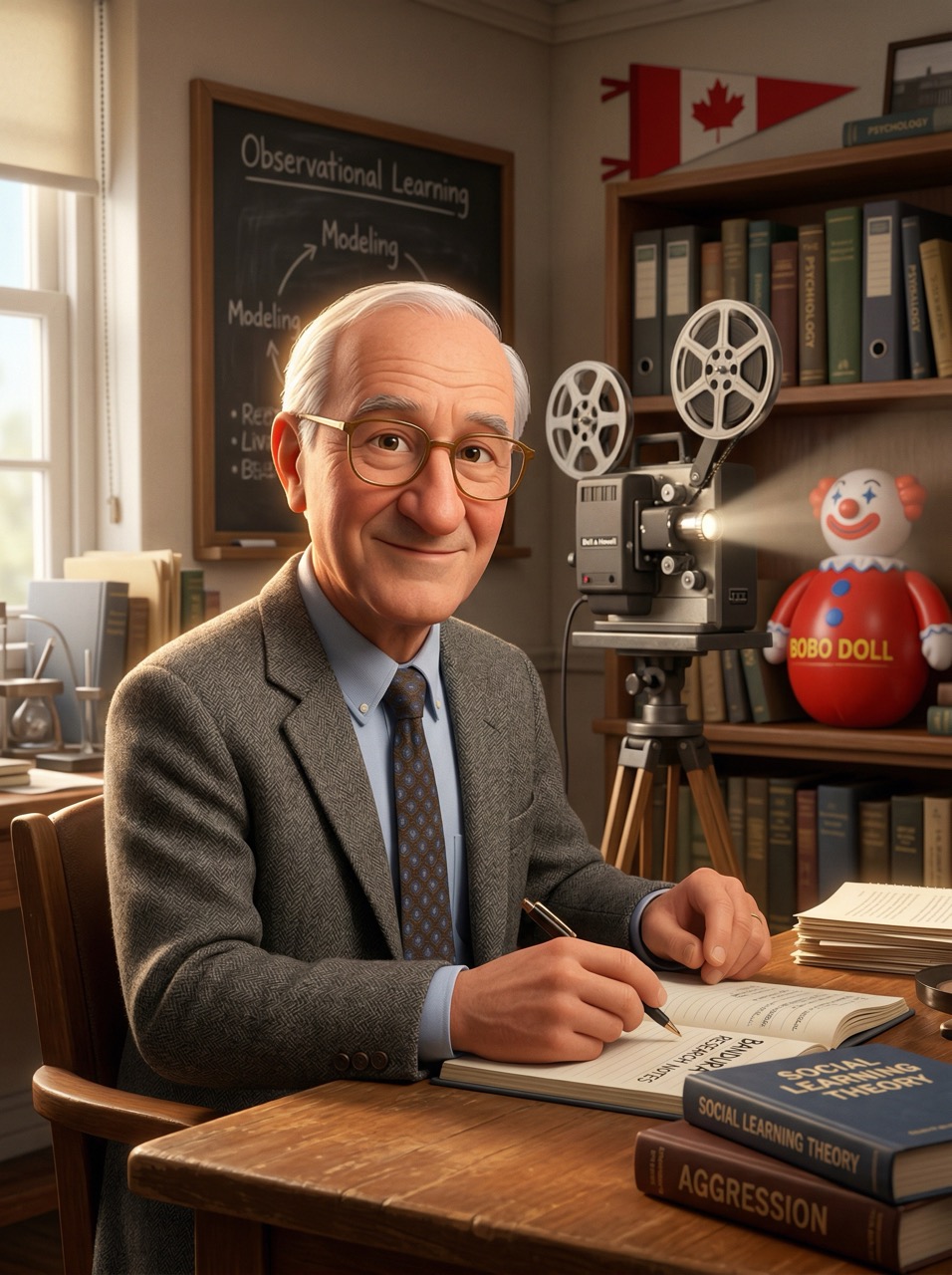

Albert Bandura — showed that people learn by watching models, consequences, and social cues, not only by direct reward.

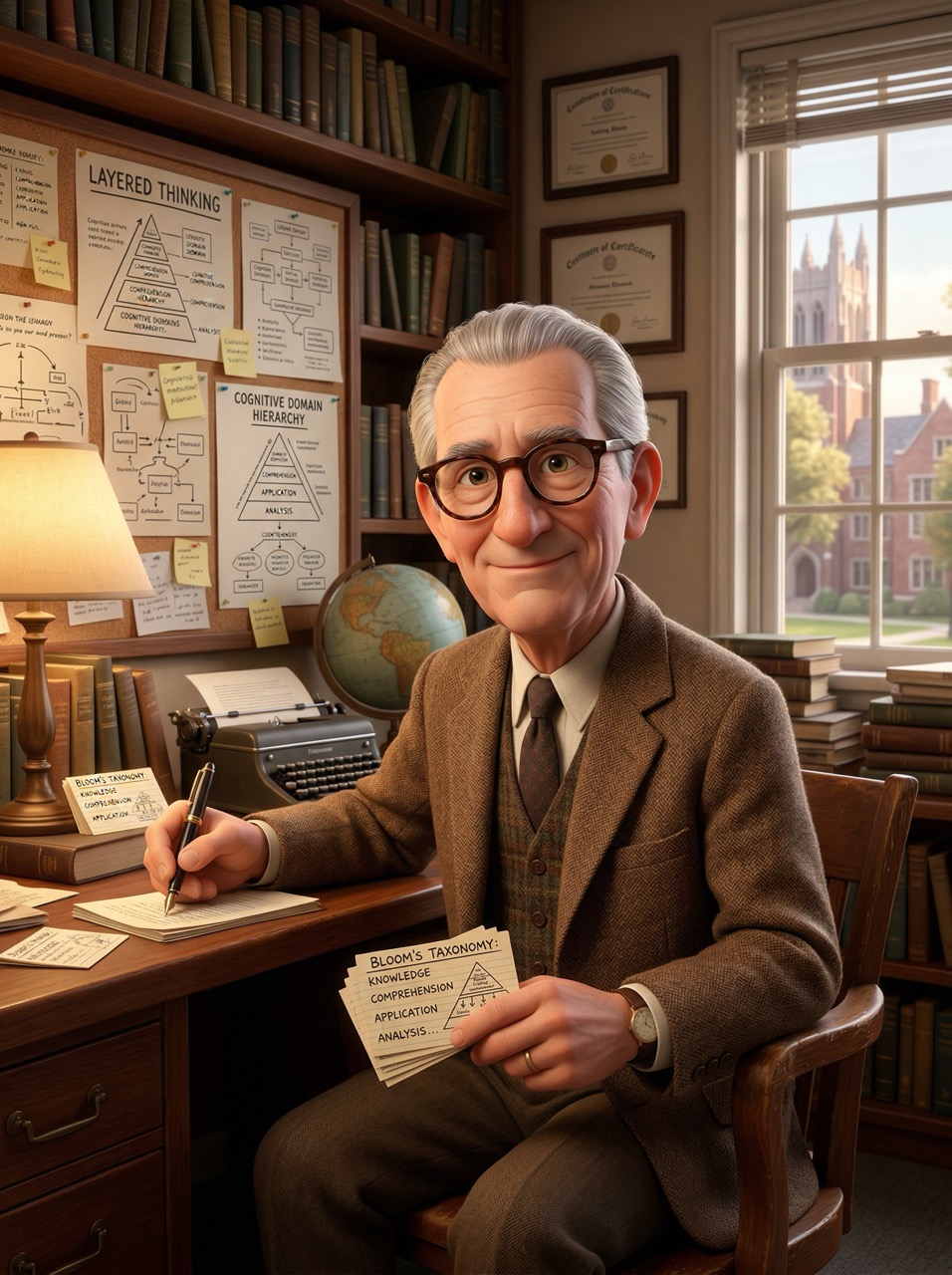

Benjamin Bloom — gave educators a shared language for levels of thinking, so “learning” could mean more than recall.

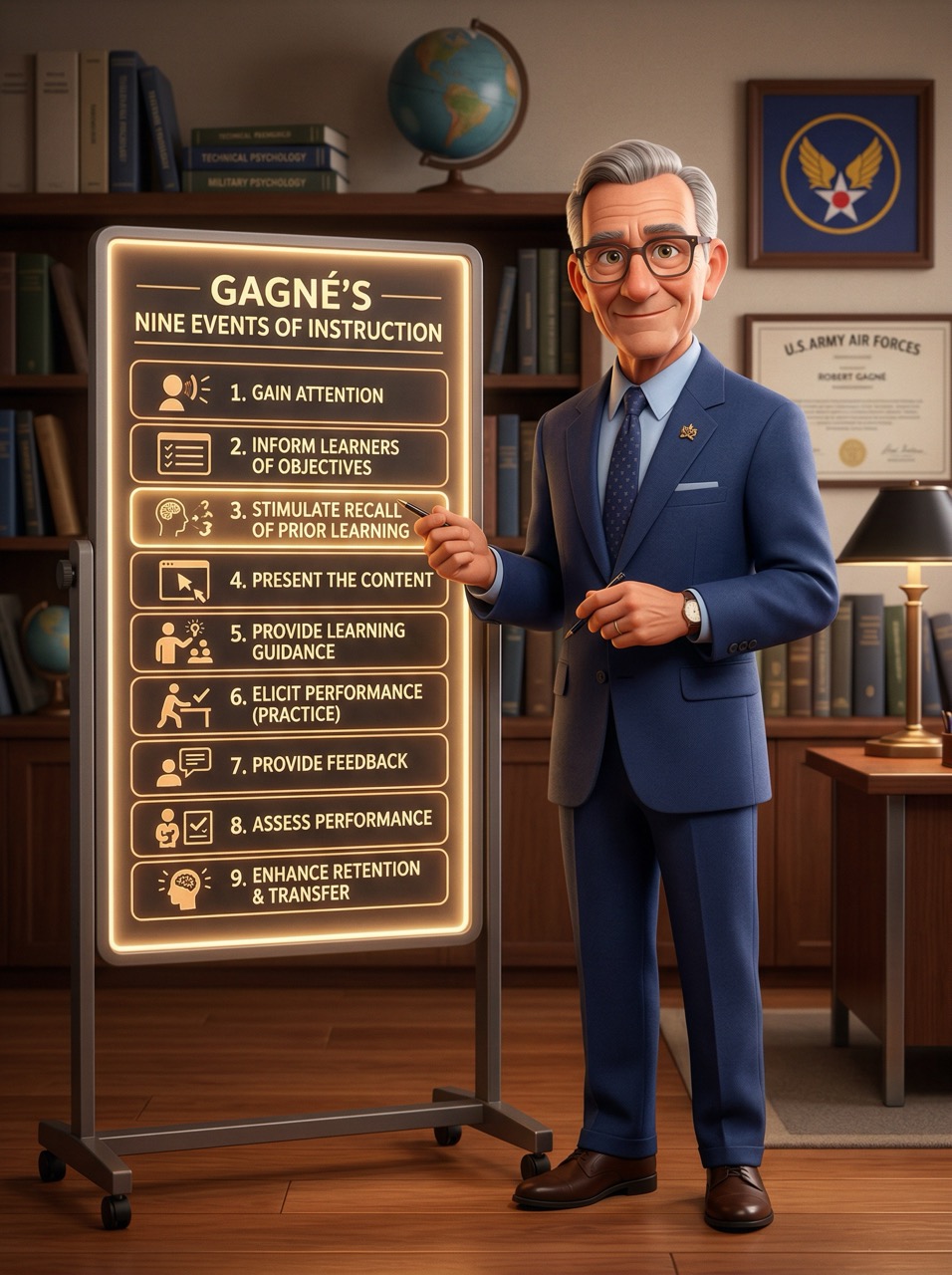

Robert Gagne — mapped instruction as a sequence of events, showing that order and support are part of the design itself.

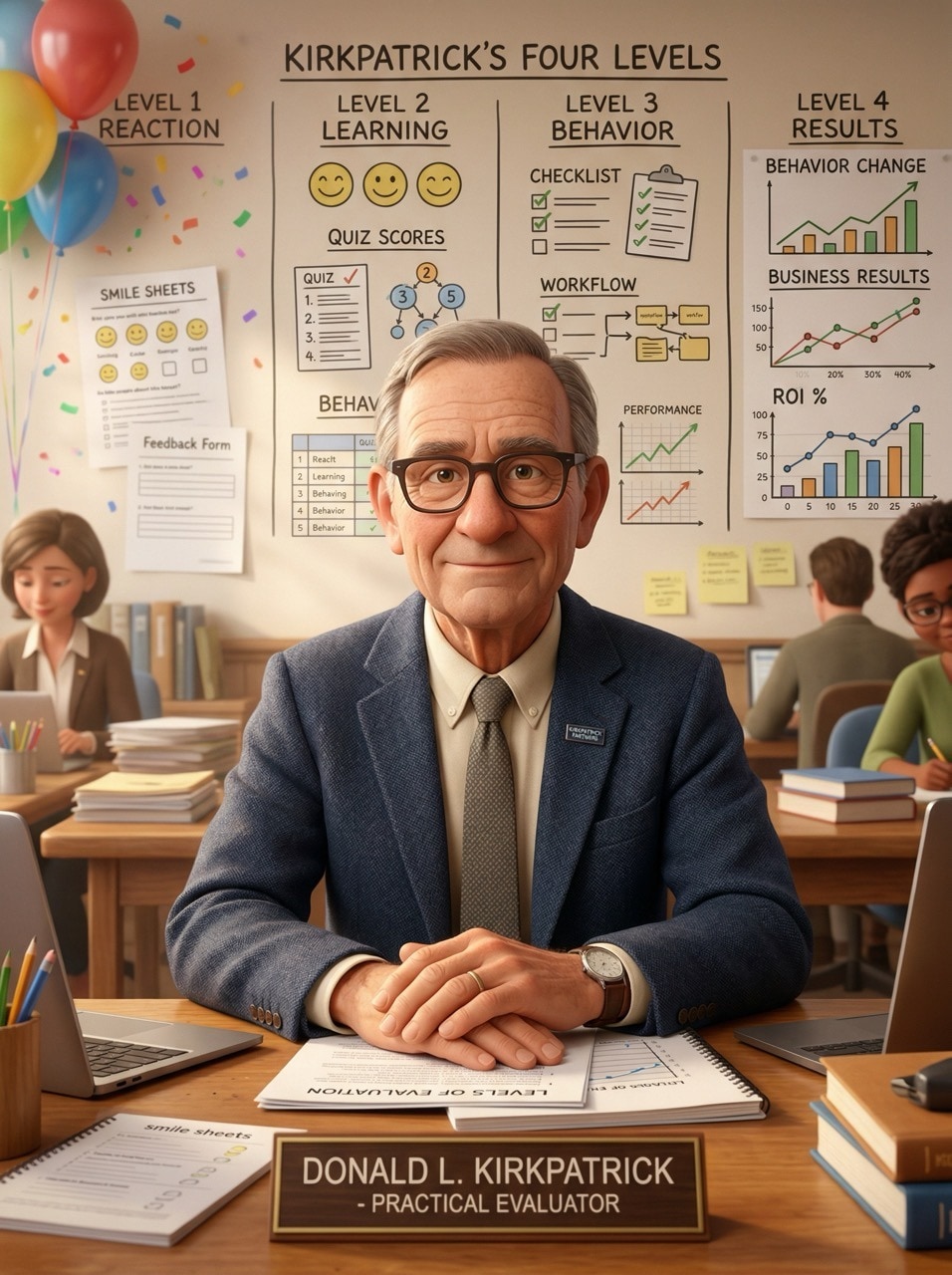

Donald Kirkpatrick — forced evaluation past happy reactions toward evidence of learning, behavior change, and real results.

John Sweller — showed that working memory is limited, so instruction must reduce unnecessary load and manage complexity carefully.

Lev Vygotsky — argued that learning is social and scaffolded, with growth happening in the space between solo ability and guided help.

Malcolm Knowles — pushed adult learning toward relevance, experience, autonomy, and problem-first design instead of schoolroom habits.

Robert Glaser — helped shift assessment toward clear performance criteria, asking whether learners can do the job rather than merely rank above peers.

Hello world?

From the 1970s onward, networked computing kept expanding how learning could be delivered. First through early digital networks. Then through the web. Then through MOOCs, tech certificates, open video, and now AI.

But the biggest change was not delivery. It was competition.

Once learning lived on the internet, it had to compete with everything else there: email, games, news, chat, and endless video. A course was no longer compared only to other courses. It was compared to every other click.

That changed the designer's job.

Instructional design could no longer stop at making content accurate and organized. It had to become experience design. The question shifted from "how do we transfer information?" to "how do we create something worth staying in?"

The learner became a user in an interface.

And now AI pushes that shift even further.

For the first time, it is possible to give each learner something like a personalized tutor: adaptive, immediate, and tireless. In that sense, AI brings back apprenticeship at scale.

But that does not make instructional design less important. It makes it more important.

If AI can explain, respond, adjust, and generate practice on demand, then the designer's job moves upstream: deciding what matters, in what order, for whom, and under what conditions.

If a learning experience is not worth choosing, it will not be chosen.

If AI can teach almost anything, then deciding what should be taught becomes the real work.